Mind and Iron: The Europeans will save us from AI?

Also, a Silicon Valley subculture that is hilarious-dangerous. And the Turing Test, the new ACT.

Hi and welcome back to Mind and Iron. I’m Steve Zeitchik, veteran of The Washington Post and Los Angeles Times and head dispatcher at this all-night taxi company.

Speaking of, a shout-out to the people we’ve been seeing at holiday parties in NYC over the past week. Your support and readership are both extremely appreciated.

Our mission here at Mind and Iron is to examine the tension — and find the harmony — between cold machines and warm humanity. We’re glad so many of you are along for the journey.

Hit the subscribe button here if you’re not already on our list.

And a quick renewal of our holiday push for subscription pledges. Writing about the future is fun but not yet profitable. So please consider committing a few dollars. It will get you all the Mind and Iron features as we move to a paid model in the new year and keep our mission alive besides.

The parties may be a’blazing but that doesn’t mean the news is a’stopping. The European Union passed its “AI Act” last Friday, and it’s a doozy. We dive in to tell you all you need to know. Also, there’s a Silicon Valley subculture called e/acc (pronounced “ee-ack;” catchy), and it’s a little more treacherous than it might seem.

Also you may have heard about the Turing Test, Alan Turing’s midcentury theory that a machine will truly have achieved intelligence when it can trick a human into thinking it’s not a machine. Computers either hurdled over the mark years ago — or the test has yet to be passed. Two AI giants hash out the issue in the public sphere/the dimly lit work-spaces where intellectual life now takes place. (The answer surprisingly…kinda matters?)

First, the future-world quote of the week:

“The EU AI Act is a global first — a unique legal framework for the development of AI you can trust [that secures] the safety and fundamental rights of people and businesses.”

—EU Commission president Ursula von der Leyen, touting what the EU just did

Let’s get to the messy business of building the future.

IronSupplement

Everything you do — and don’t — need to know in future-world this week

How the EU is actually getting it right on AI; Turing tests everywhere; yacking about e/acc

1. A FEW WEEKS AGO WE TOLD YOU ABOUT THE CONFLICT BETWEEN THE e/acc MOVEMENT AND EFFECTIVE ALTRUISM, JOKING THAT NONE OF IT WOULD BE ON THE FINAL.

It still won't be on the final — these accouterments are more about a Silicon Valley subculture than anything most of us who care about the future need to worry about. But this e/acc business does at least...conceal an idea, so let's dig in a bit. (Also this week the New York Times weighs in, with a Kevin Roose column laying out the scene.)

In a paragraph: e/acc, or "effective accelerationism," began as a response to effective altruism. Effective altruists are part of a well-meaning if slightly rich-clueless movement in which the goal is to make a lot of money so you can help as many people as possible and also kinda generally think about consequences. The effective accelerationists came along to say, “well don't think about consequences," though even that reductive statement might be giving them too much philosophical credence.

Roose doesn't spell it out quite this way, but e/acc is really a union of three groups:

A. Party-til-dawn lightweights, of the same ilk as those who ran around San Francisco during the dot-com boom, who are happy to embrace this e/acc ideology because that implies the partying actually means something, man, and what better chaser to 2 a.m. substances than the illusion of substance. (Slightly bad timing since, as the NYT also noted, good-time startup money is drying up.)

B. Largely white and largely male and largely Millennial libertarians who 18 months ago were shilling for crypto as the great liberator, and 18 months from now will likely have moved on to the next tech blank canvas onto which they can project their government-wants-to-strangle-me ideology, which comes from a deep immersion in no fewer than 1.5 Ayn Rand novels

C. High-powered types with a vested interest in ensuring the most rip-roaring and regulation-free world of A.I. possible, because any other outcome would interfere with their investment portfolios — or, in rare cases, with their genuine belief that unfettered A.I. will lead to a more beautiful form of consciousness (Again, this is rare)

Those first two groups give me a case of the yawns, because, well, they're the first two groups. The third group is more interesting — and fraught. It includes Sam Altman and Marc Andreessen and fellow VC kingpin Garry Tan and a startup founder named Guillaume Verdon who goes by the handle Beff Jezos — in short, people who will benefit financially from any lack of impediments. And who actually have the clout to prevent them.

But as it turns out, they are also what makes the first two groups problematic. Because the third group could use the first two groups — to make their A.I.-capitalism-at-all-costs agenda seem cool, and, more dangerously, as a cudgel to caricature those of us normal humans who are excited about AI but also want it carried out responsibly.

As we’ve noted, A.I. doesn't have to go rogue for it to be dangerous — computers with autonomous powers could give rise to all kinds of disinfo, social-illusion, brain-atrophying risks without ever going sentient and "turning on" humans. In fact, the "turning on humans" argument more often these days is heard from people like Altman as a sleight of hand/straw man precisely to distract us from those risks.

And this is where the e/acc movement becomes dangerous. Sam Altman can't very well go around painting people who have sensible concerns as radicals. The movement's proponents, though, can get out there spreading the caricature far and wide — "People who care about A.I. safety are a bunch of paranoiacs who've seen ‘The Terminator’ one too many times," etc etc. When in truth the great majority of people who care about AI safety just want more testing for a wide range of potential risks and/or suggestions on how these risks might be mitigated (aka everything we didn’t do with social media). But trolling is fun! And it sticks!

All of this is a little depressing, since these folks now seem to have wind at their backs. The people worried about safety just got run out of the OpenAI board, and the people who are the e/acc leaders are some of the richest people in the Valley. So even as rival companies like Anthropic (and to a much lesser extent Google) are trying to throw on the occasional brake, they will generally have much less success in the face of unbridled damn-the-consequences capitalist power, and really may struggle now that such power has a fun Party-On-Wayne movement with which to conceal its human-shrugging agenda. Any tech-related industry is only as safe as its least safe participant. And these pedal-slamming e/acc activists are the least safe participants.

But I wouldn't press the panic button just yet. There are many adults working in AI who see through this veil — who realize this e/acc business is often so much crypto-style silliness that shouldn't distract us from figuring out the real risks and reacting accordingly. One of my favorite quotes in the Roose piece comes from Aidan Gomez, head of the Canadian A.I. company Cohere, which is powerful in its own right and also is basically doing things the right way.

"I liked it when it was an ironic countermovement instead of what seems to be transforming into an earnest libertarian movement,” Gomez said, which I take to mean "it's cool to make sure people don't let their pessimism get the better of them but let's not kid ourselves that there is real meaning beyond these hoary capitalist tropes."

The reality is that as much as there's reason to fear this headlong rush by billionaire investors and the millionaire CEOs they puppet, there are also many people in this space who will not give them a blank check no matter how many alphabet-soup movements they marshal. Which brings me to the truly big news of the past week....

2. EUROPE IS ON THE CASE.

That has been true for tech regulations for a while. Europe has digital privacy laws, for instance, that can make us all sleep easier.

But the European Union particularly distinguished itself in the last week with the passage of the “AI Act,” a comprehensive set of laws that sets the red lines within which companies and governments must work if they hope to do biz on the continent. And perhaps — don’t hold your breath — sets a standard for the U.S. in the process. (What we have right now is a question-riddled executive order.)

The headline is that a body that governs 27 member nations and 450 million people has not only managed to find consensus — but a consensus that is toothy and has a philosophical through-line. As the laws kick in over the next two years, companies that violate the AI Act can be fined as much as seven percent of their annual revenue.

With the legislation (which was two years in the making), the EU basically pivoted away from a narrower product-safety approach of regulating AI apps to instead regulating the very companies that create the models on which all the apps are based (like OpenAI). This approach, known as regulating “foundation” models, is far more sweeping, and it was motivated by lawmakers seeing just how powerful ChatGPT has been over the past year.

(For a while this foundation approach was resisted by officials from France, Germany and Italy, countries with a whole slew of companies that worry about falling behind the U.S. in building these models. But lawmakers largely overcame their objections.)

Which is not to say that the AI Act is perfect or free of misconceptions. Based on the extensive reporting about it (the actual language has not as of this writing been released), here are four highlights among the dozens worth knowing, from one of the biggest pieces of tech legislation many of us will see in our lifetimes.

A. It bans social scoring

Remember that old “Black Mirror” episode in which a person’s popularity metric was posted above their head and they had to go through life in a most obsequious/inauthentic way to keep it propped up and avoid becoming a pariah?

Imagine that but with AI calculating the score. And governments acting on it.

China is said to practice some version of this, feeding a person’s obedience tendencies into a machine and having it spit out a score that the government then uses for who-knows-what purpose (though it’s unclear how intrusive it really is).

The EU rightly thinks this is all noxious stuff. And even if non-authoritarian countries would be wise enough to resist this at the government level, that doesn’t mean it couldn’t be used by companies — deciding, say, which employees demonstrated enough fealty to the Man and making bonus or layoff decisions based on it.

Or schools, which could replace a more human form of student assessment with an algorithm that decides which students deserve punishment or reward. The “going on your permanent record” threat has long been a joke, because what principal is organized enough to keep track, let alone do anything about it besides yell egads in the hallway. (Apparently all school administrators are now Mr. Weatherbee from Riverdale High.)

But machines can take in a helluva lot of information and calculate what it says about students in all kinds of ways. And they are only too happy to remember this stuff for us.

So the EU banned these types of machine-led assessments for both workplaces and schools. You want AI to monitor how your employees or students are doing? Not on our continent. (The permissibility for governments, which could use it for such things as benefit distribution too, is less clear.)

Machine-led metrics will be a factor in personnel decisions, no way around it. They might even be helpful. But we probably don’t want any of them as the primary decider of who’s worthy of a job, or party invite, or government blacklist.

B. Facial recognition is not allowed, sort of, maybe, except when it is

Surveillance capitalism and Big Brother — not just for questionable movies anymore. Machines can now recognize faces with ease and then link what they see to real-life identities. We’re no longer technically constrained from creating giant databases in which any time you so much as walk out in public, someone with a vested interest in knowing everything about you could do so. (That sentence sounded paranoid after I wrote it, yet it’s as matter-of-fact as they come.)

Think CCTV, but instead of passively taking video, the system instantly knows who you are and where you’re going at all times.

None of this sounds like something most of us would want to live with. The EU agrees. The AI Act bans governments and law-enforcement agencies from building large facial recognition databases (despite many countries wanting carve-outs for their own cops). The EU has, though, made exceptions for “real time” uses to catch dangerous criminals — suspected terrorists, traffickers and the like.

Seems like a reasonable distinction. And yet….

How real time is “real time?” How do you stop facial recognition from being used on everyone in the environment where the suspect appeared? How do you even know who the suspect is if you don’t have a database?

Bothered by all these questions, Amnesty International objected to the provisions. The AI Act, the group said, “in effect greenlighted dystopian digital surveillance in the 27 EU Member States, setting a devastating precedent globally.” Yikes.

Or as German member of European Parliament Svenja Hahn, who was involved in the negotiations, said in Brussels-ese, "We succeeded in preventing biometric mass surveillance. Despite an uphill battle over several days of negotiations, it was not possible to achieve a complete ban on real-time biometric identification.”

C. AI media must be transparent

This one is really hard to regulate. So give the EU props for…trying?

One of the biggest concerns of this new AI world is distinguishing the real from the fake — AI-created videos will soon mimic factual ones to the point of indistinguishability. Bad actors will bad-act, and we need standards to protect us.

So the Europeans have come along to say that those behind the biggest AI systems must be transparent about how media came into being. As the New York Times writes, “makers of the largest general-purpose A.I. systems, like those powering the ChatGPT chatbot, would face new transparency requirements. Chatbots and software that creates manipulated images such as ‘deepfakes’ would have to make clear that what people were seeing was generated by A.I., according to E.U. officials and earlier drafts of the law.”

Sounds great. The problem is so many of the apps or individuals that use them won’t be under the same requirements. It’s like saying that Microsoft must disclose any lie that was composed in Word. Cool idea. Now who’s going to stop someone from using the software to pen a conspiracy theory?

The transparency law, I should note, will require companies to disclose when a customer is talking to a chatbot. Which, given how human-like these things are becoming, will actually come in handy.

D. Focus on the uses, not the tech

One of the biggest decisions made by the act’s authors is judging not how the technology works at its coding level but how it’s applied. Or, put another way, they will factor in risk.

So there might be comparatively little regulation for the AI that tools around in “low-risk” realms like shopping recommendations. But a lot of it in, say, medical arenas, because the risk is a lot greater there.

As a broad theoretical construct, this is great. Too much of the anti-regulation crowd has tried to deflect by saying you can’t regulate scientific advances. It’s true, you can’t — and shouldn’t. What should be assessed is the effects of the tech. This distinction sets the debate on the right terms.

But I worry that the act looks at these effects through the wrong lens. Right now the AI Act judges risk primarily by the gravity of the industry. Medical uses, for instance, with their high life-or-death stakes, will face a heap of regulation, while social recommendations will face very little. But of course the idea that dangers can’t come from more un-serious industries is silly and ahistorical (again, see under social media).

Conversely, the idea that medicine, with its serious stakes, should automatically get more scrutiny is wonky at the other end. The life-or-death consequence is also a reason in many cases why we wouldn’t want to slow AI down — too many people could be helped by the innovations in the years it takes for their creators to hurdle the red tape.

I realize these can be a lot of shades of nuance for a bureaucrat to parse (hey, that’s why they get the big bucks). But the better distinctions to be made here seem to be between outcomes — looking within medicine, for instance, to see if AI is being used in experimental instances to help serious or terminal cases and fast-tracking those, while leaving less urgent deployments to go through a more traditional process.

By the same token, we shouldn’t group all social or retail interactions in the regulation-lite bucket — if there are political uses, for instance, or concerns from mental-health experts, we might regulate these areas more strongly even if it just seems like harmless shopping or socializing fun.

Plus don’t forget that tech regulation meant to slow down juggernauts can have the opposite effect. As my sharp ex-WaPo colleague Cat Zakrzewski wrote (in regard to an earlier EU data-privacy law) “there are concerns that the law created costly compliance measures that have hampered small businesses, and that lengthy investigations and relatively small fines have blunted its efficacy among the world’s largest companies.”

The efforts the EU made here to build in variability should be appreciated; not all areas affected by AI are indeed the same. But I think when it comes to this new tech we need to see a lot more subtleties. We need to revise some of our old rubrics. We need to look not only at the nature of the industry but at the perils of the outcome. And we need to act fast — the tech moves a lot quicker than governments. Not to do any of this would be, well, high risk.

[The Associated Press, Politico, Reuters and The Washington Post]

3. MACHINES HAVE WON “JEOPARDY!,” TAKEN DOWN CHESS CHAMPIONS AND RE-WRITTEN LEVITICUS AS A TAYLOR SWIFT SONG.

But have they pulled off the ultimate in computer achievements — passing the Turing Test?

I say that somewhat facetiously, as the worthiness of the Turing Test is more hotly debated than the best track on “Midnights.” Devised by the British computer scientist Alan Turing in 1950 (!), it has a human evaluator determining which of two anonymous text-based conversation partners is a machine and which is a human. If the evaluator can tell which is the machine, the machine will have failed; if the evaluator can’t, the machine will have passed.

The test has long been controversial, not least because while it’s ostensibly a test of artificial intelligence, many have pointed out that all a computer needs to do to pass is mimic human speech through various forms of pattern arrangement, which is a far cry from actually being, you know, intelligent.

For decades this didn’t even matter, because no computer system could pass very often. But in recent years — and especially in the past year of ChatGPT — it seems like it could. Maybe. Could it?

This was the subject of debate between Sam Altman, who is of course behind said chatbot, and the AI expert Gary Marcus as they had it out on X over the past week.

Last weekend Altman posted:

Then Marcus replied:

Marcus was citing a research paper from six weeks ago in which GPT-4 passed the Turing Test just 41 percent of the time. That’s better than the paltry marks set by other systems — but well short of the 63 percent that researchers calculated as the baseline for human intelligence.

Further, Marcus believes, as many AI researchers do, that even when machines ARE fooling the humans, they’re doing it with tricks that only make it seem like they’re rivaling us flesh-and-blooders.

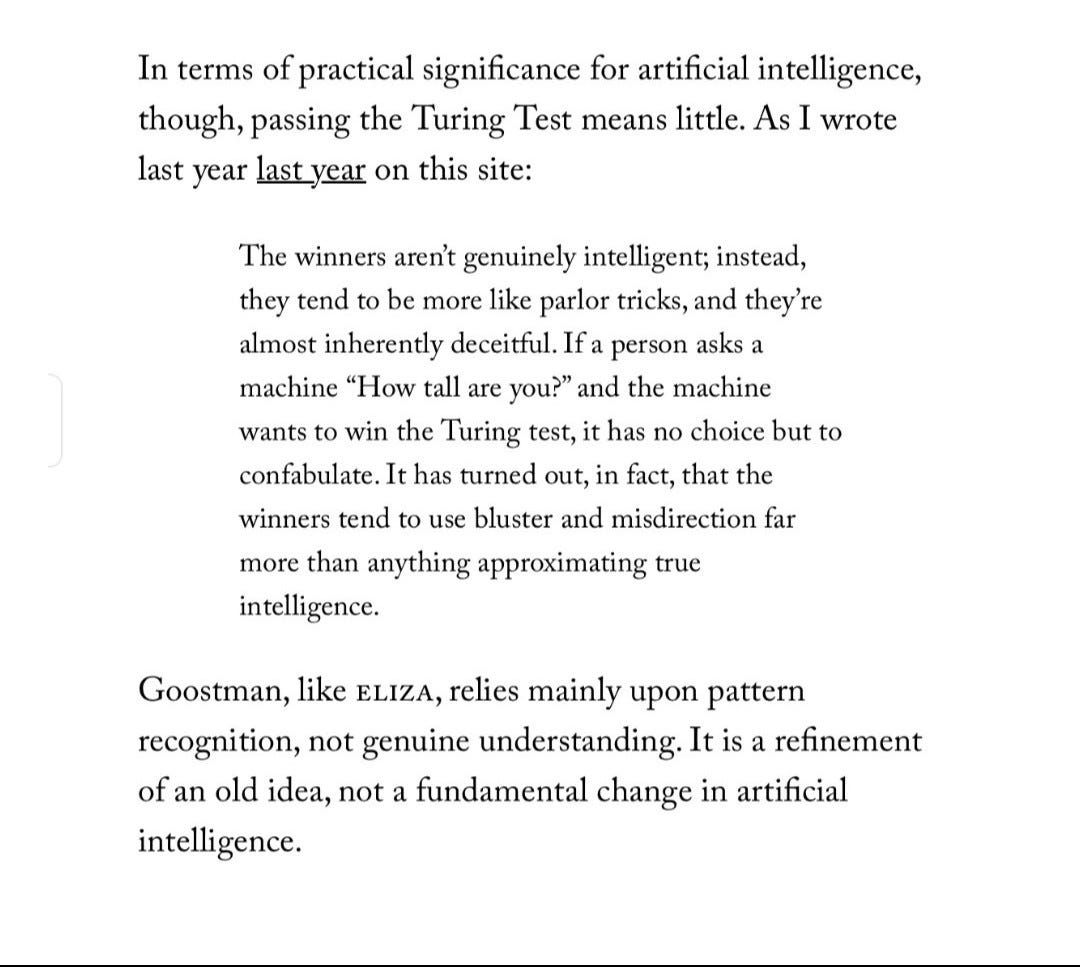

In a Substack newsletter he cited a New Yorker piece that he wrote a decade ago:

In other words, even passing isn’t significant, because the Turing Test isn’t very instructive. (Marcus instead suggests a “Comprehension Challenge” in which a machine watches an episode of television and gives a summary. And “no existing program…can currently come close to doing what any bright, real teenager can do: watch an episode of ‘The Simpsons’ and tell us when to laugh,” he concludes.)

As an abstract matter, Marcus is of course right. The Turing Test isn’t a very good measure of current machine tech — and hasn’t really been passed anyway.

And yet Altman’s initial post highlights why it unfortunately does matter. The Turing Test still has currency for the simple reason that it’s been around so long, and for the less-simple reason that people want to believe they are living in transformative times, making them devise (and game) markers that confirm this wish.

Once that happens, the issue of whether the test has actually been passed — let alone whether that means anything — goes by the boards. What becomes significant is the perception that AI is reaching new heights — it becomes significant in terms of venture capital, in terms of cultural ferment, in terms of all the ways that optics turn into reality in our scroll-y impression-happy world.

God bless all the voices trying to bring reason to this; we need more of them. Many of them are heard. But Sam Altman is going to keep talking about the great heights his and other AI companies are reaching, and even the world’s strongest human intelligences will find it difficult to bring them down.

The Mind and Iron Totally Scientific Apocalypse Score

Every week we bring you the TSAS — the TOTALLY SCIENTIFIC APOCALYPSE SCORE (tm). It’s a barometer of the biggest future-world news of the week, from a sink-to-our-doom -5 or -6 to a life-is-great +5 or +6 the other way.

Here’s how the future looks this week:

A TROLL-Y MOVEMENT IS WHIPPING UP AI FULL-SPEED-AHEADISM: Of course. -2

THE EUROPEANS TAKE A MAJOR AI SAFETY ACTION: You don’t say…. +4.5

PEOPLE STILL WANT TO TELL THEMSELVES TURING-TEST MYTHS: -1.5